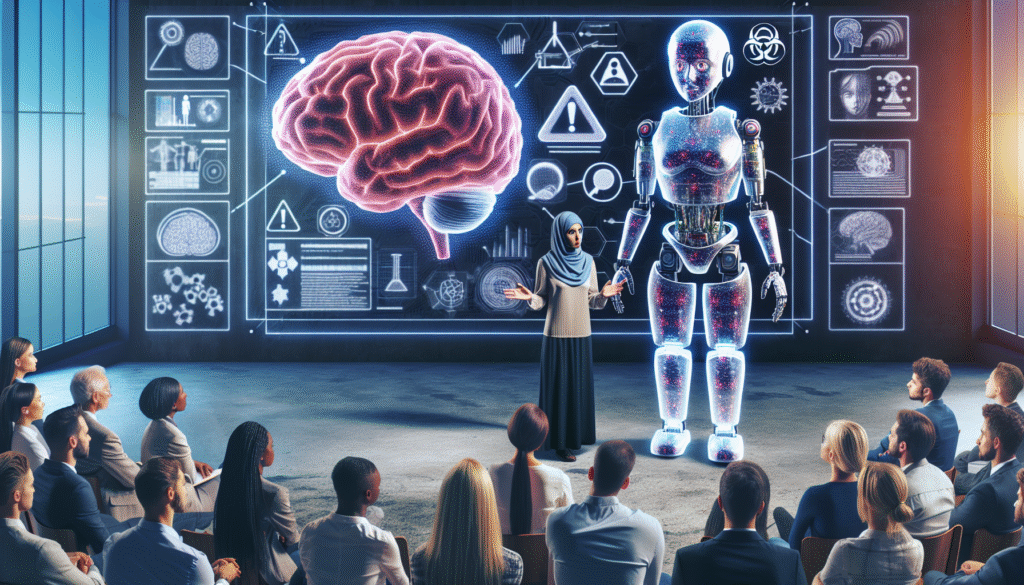

Professor Guillaume Thierry, a leading neuroscientist at Bangor University, urges the public to keep a practical perspective on artificial intelligence: AI systems are highly sophisticated tools, and nothing more. He emphasizes the danger of ascribing human-like qualities to AI, arguing that doing so can mislead us about the nature and capabilities of these systems. The narrative often presented in media and tech circles anthropomorphizes AI, attributing to it thought, intention, and emotion, which are inherently human characteristics, and this can cloud our judgment and understanding of these machines. Thierry cautions against being swayed by depictions of AI in popular culture, which often feature human-like robots capable of complex thought and moral reasoning and so blur the line between technology and humanity. Describing AI systems as literally ‘thinking’ or ‘learning’ can skew public perception, leading to unrealistic expectations or unwarranted fear about their role in our lives. Instead, Thierry advocates a more grounded approach that recognizes AI systems for what they are: programs whose capacities are determined by their design and the intentions of their developers. He calls for more critical engagement with how AI is discussed and presented, and for a clearer demarcation between the functions of AI and human attributes. Ultimately, by stripping away these anthropomorphic illusions, we can better understand and interact with AI systems in a way that makes use of their capabilities without overestimating their autonomy or potential.
